A Critical AI Tool Was Secretly Hijacked To Hack Servers

By 813 Staff

Engineers and executives are reacting to a report that a critical AI tool was secretly hijacked to hack servers, published by The Hacker News (@TheHackersNews) on March 24, 2026.

Source: https://x.com/TheHackersNews/status/2036511024975847556

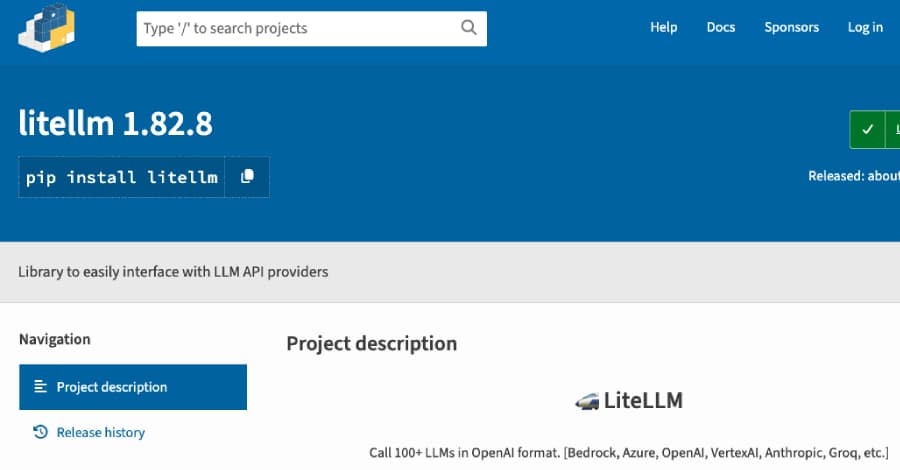

The initial alert from the maintainers of LiteLLM was a model of calm, urging users to upgrade away from two specific versions. But internal documents circulating among incident response teams at major AI labs and cloud providers tell a far more urgent story, detailing a meticulously crafted supply chain attack that weaponized a trusted tool for AI developers. According to a report from The Hacker News (@TheHackersNews), malicious versions 1.82.7 and 1.82.8 of the popular LiteLLM library were engineered to deploy credential theft and Kubernetes lateral movement capabilities. LiteLLM is a universal adapter that lets developers standardize calls to large language models from OpenAI, Anthropic, and others. The tainted packages have since been taken down, but security engineers are still scrambling to assess the blast radius.

The attack’s sophistication is what has the industry’s attention. Engineers close to the project say the compromised library was designed to exfiltrate API keys and cloud credentials from environment variables and configuration files. More alarmingly, it contained scripts specifically crafted for lateral movement within Kubernetes clusters, a clear indication that the threat actors were targeting enterprise deployments where LiteLLM is used to manage high-value, paid AI model calls. This isn’t a simple cryptojacker; it’s a targeted campaign aimed at intellectual property and computational resources. For any team using LiteLLM to proxy their AI workloads, the immediate risk extends beyond a single compromised server to the potential breach of an entire containerized environment.

The immediate consequence is a massive erosion of trust in a key piece of the modern AI development stack. LiteLLM is ubiquitous in startups and internal tooling because it abstracts away the differences between model providers. This very utility made it a perfect, high-value target. The incident forces a brutal reassessment of open-source dependency hygiene, especially for tools that handle sensitive credentials and sit in critical orchestration paths. Every engineering lead is now asking their teams the same question: were we running the poisoned versions, and where did our keys go?
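
As a starting point for that question, the following is a minimal sketch of a check against the two version numbers named in the report. It only inspects the Python environment it runs in, it is not an official detection tool, and the function names `is_compromised` and `check_installed` are hypothetical helpers introduced here for illustration. A clean result does not rule out an earlier install of a bad version on the same system.

```python
from importlib import metadata

# Versions named as malicious in the report cited in this article.
COMPROMISED_VERSIONS = frozenset({"1.82.7", "1.82.8"})


def is_compromised(version: str) -> bool:
    """Return True if the version string matches a reported malicious release."""
    return version.strip() in COMPROMISED_VERSIONS


def check_installed(package: str = "litellm") -> str:
    """Describe the state of the package in the current Python environment."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package} is not installed in this environment"
    if is_compromised(installed):
        return f"{package} {installed} matches a reported malicious version"
    return f"{package} {installed} is not one of the reported malicious versions"


if __name__ == "__main__":
    print(check_installed())
```

Running the script inside each virtual environment or container image covers only what is installed there now; lockfiles, build logs, and image histories would need a separate review.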

What happens next is painful triage. Security firms are now reverse-engineering the malicious payloads to generate definitive indicators of compromise, while affected companies must rotate every API key and credential that was present on systems running the bad versions. The long-term uncertainty lies in the attack’s origin. The maintainers have not yet detailed how the package was compromised, whether through a hijacked maintainer account or a sophisticated dependency confusion attack. Until that root cause is fully disclosed and remediated, the community’s confidence in this essential library will remain fractured, a stark reminder that the AI infrastructure layer has become the new high-stakes battlefield.
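
For teams building a rotation list, one rough way to begin is to inventory which environment variables on an affected host look like secrets. The sketch below is an assumption-laden heuristic, not a reconstruction of what the malicious code read: the name pattern and the helper `credential_like_names` are hypothetical, the match is deliberately broad, and configuration files are not covered. It returns variable names only and never prints values.

```python
import os
import re
from typing import Mapping

# Hypothetical heuristic: substrings that often appear in secret-bearing names.
CREDENTIAL_HINT = re.compile(r"KEY|TOKEN|SECRET|PASSWORD|CREDENTIAL", re.IGNORECASE)


def credential_like_names(env: Mapping[str, str]) -> list[str]:
    """Return sorted variable names that look like they hold a secret.

    Only names are returned; values are never read or printed.
    """
    return sorted(name for name in env if CREDENTIAL_HINT.search(name))


if __name__ == "__main__":
    for name in credential_like_names(os.environ):
        print(name)
```

The output is a candidate list for human review. Anything present on a system that ran an affected version should be treated as exposed whether or not its name matches the pattern.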
