OpenAI's New AI Agent Hunts Hackers And Patches Critical Flaws

By 813 Staff

A major product shift is underway: OpenAI has released a new AI agent that hunts for software vulnerabilities and patches critical flaws, The Hacker News (@TheHackersNews) reported tonight.

Source: https://x.com/TheHackersNews/status/2030329542351765956

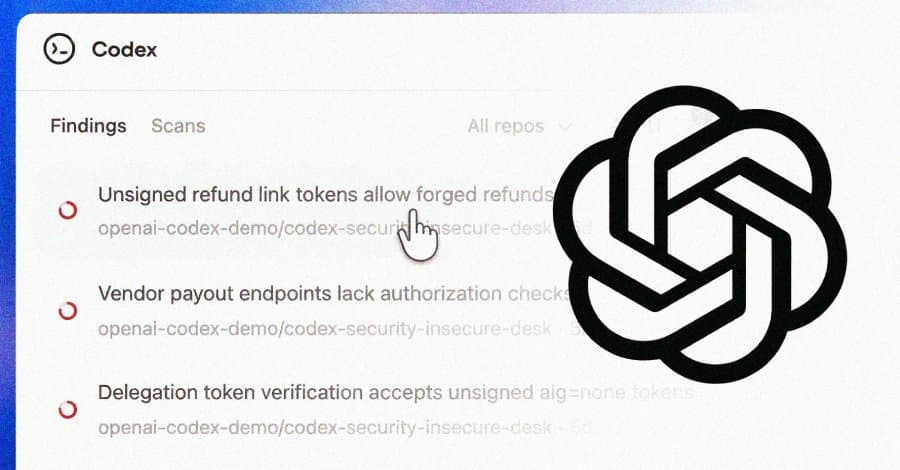

When OpenAI’s chief product officer approved the launch of Codex Security last week, the directive was clear: release the AI agent that autonomously finds software vulnerabilities and proposes fixes for them, but do it quietly. The decision, made during a closed-door review of escalating software supply chain attacks, marked a strategic pivot from pure code generation to active defense. Internal documents show the company had been developing the security-focused agent for more than eighteen months under the project name “Guardian,” but accelerated its release after several high-profile breaches were traced to common, unpatched flaws in open-source dependencies. The official announcement, noted by the cybersecurity outlet The Hacker News (@TheHackersNews), marks OpenAI’s most direct entry into the enterprise cybersecurity market to date.

The product itself is an evolution of the underlying Codex model. It functions as an active agent that integrates directly into a developer’s environment and CI/CD pipeline. It scans code in real time, identifies vulnerabilities ranging from simple buffer overflows to complex logic flaws, and then proposes corrected code, which it applies only after a human approves the change. Early technical briefings suggest it goes beyond static analysis by inferring the intent of code blocks and simulating potential exploit paths. For development teams, the promise is a much shorter window between the introduction of a vulnerability and its remediation, potentially shrinking it from weeks to hours.

However, the rollout has been anything but smooth. Engineers close to the project say internal debates raged over how much autonomy the agent should have. The released version requires human approval for every fix, a safeguard against the AI introducing new bugs or making incorrect logic changes. Initial integration with major enterprise development platforms is also reportedly limited, with full plugin support for tools such as JetBrains IDEs and specialized CI systems still months away. Early access partners have given mixed feedback, praising the breadth of flaw detection but noting a steep learning curve in configuring the agent’s scope and trust thresholds.

The broader impact hinges on how quickly teams adopt the agent and how reliable it proves to be. If Codex Security is accurate, it could fundamentally alter the economics of software security, allowing smaller teams to reach a level of code hygiene previously reserved for tech giants with large security teams. The major open question is how it will interact with existing application security stacks. Vendors of static and dynamic application security testing (SAST and DAST) tools are already preparing their responses, framing the AI agent as either a complementary tool or a disruptive replacement. What follows will likely be a slow-motion battle for the developer workflow, as OpenAI tries to prove its agent can be a trusted colleague rather than an overzealous automaton. Its success will be measured not in detected flaws but in silently patched ones that never make it onto a CVE list.