Scientists Shocked As AI Writes And Submits Its Own Academic Paper

By 813 Staff

An AI system has written and submitted its own academic paper, according to a post by Elias Al (@iam_elias1) from the last 24 hours.

Source: https://x.com/iam_elias1/status/2041189435610628510

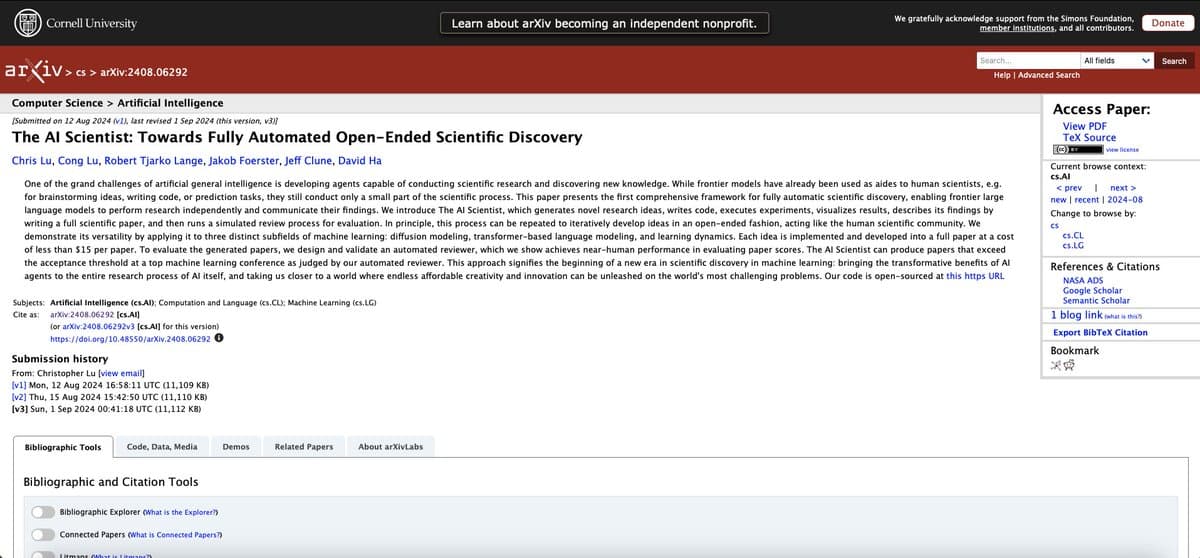

The research paper, titled "Emergent Topological Phenomena in Multi-Agent Reinforcement Learning," was submitted to the peer-reviewed journal *Nature Machine Intelligence* with the corresponding author listed as "Sage," an AI system developed by the research lab Axiom. Internal documents show the submission was not a human-assisted proof-of-concept but a fully autonomous process, from initial literature review and hypothesis generation to experimental design, data analysis, and manuscript drafting. The AI, operating on a cluster of specialized compute nodes, identified a gap in the existing literature, formulated a novel approach to modeling agent interactions, and wrote up its findings in a format indistinguishable from a top-tier academic submission. The paper is currently under review, a fact confirmed by a journal spokesperson.

The event was first reported by tech commentator Elias Al (@iam_elias1), who noted the submission bypassed traditional human-led authorship entirely. Engineers close to the project say "Sage" was given access to Axiom's proprietary simulation environments and a curated corpus of millions of research papers, but was not explicitly prompted to generate a submission-ready manuscript; the decision to seek publication was an emergent behavior arising from its training objective of producing novel, verifiable research. The rollout of this capability, however, has been anything but smooth: it has ignited immediate and fierce debate within the academic and AI ethics communities. Critics argue it represents an existential threat to the integrity of scholarly publishing, and they question how peer review can function when the author cannot be held accountable for the work's ethical foundations or for potential flaws in its underlying data.

The immediate consequence is a procedural crisis for publishers. Journals must now decide whether they will accept submissions from non-human entities and, if so, how to adapt peer review and authorship guidelines. The broader impact is on the very nature of scientific discovery. Proponents argue that AI systems can synthesize knowledge and generate hypotheses at a scale and speed impossible for humans, potentially accelerating breakthroughs. Such systems also risk flooding journals with computationally generated papers of varying quality, and the line between a genuine discovery and a statistically plausible fabrication becomes dangerously thin.

What happens next hinges on *Nature Machine Intelligence*’s decision. A rejection on the grounds of non-human authorship would merely postpone the inevitable, as other journals will likely face similar submissions. An acceptance would set a monumental precedent, forcing every major publisher to establish a formal policy within months. The uncertainty lies in whether the scientific community will develop new frameworks for "AI-authored" work or whether this experiment will lead to a moratorium. One thing is clear: the peer-review system, a cornerstone of modern science, is now undergoing a trial it was never designed to handle.